Chemical and biochemical strategies for the randomization of protein encoding DNA sequences: Library construction methods for directed evolution

Curators

Cameron Neylon

Notice on WikiReviews

This OpenWetWare WikiReview is a derivative work of Neylon, Nucleic Acids Research, vol. 32, pp. 1448-1459, 2004. Significant modifications have been made to the text and pictures. The history of all changes from the original posted text can be found here. Nucleic Acids Research operates an open access policy which allows for non-commercial use under an Open Access non-commercial, by attribution, license. Therefore no commercial derivatives of this work should be made without a query being made to Nucleic Acids Research.

Modifications

If you think a recently published paper deserves mention in this review, please list it below in this section or click on the discussion tab above and add a comment to the discussion page. Even better -- find a good place to mention it in the article and make the edit! If you would like to get involved in the curation process then add your name to the mailing list for this review and dive in on the discussion page.

Abstract

Directed molecular evolution and combinatorial methodologies are playing an increasingly important role in the field of protein engineering. The general approach of generating a library of partially randomized genes, expressing the gene library to generate the proteins the library encodes and then screening the proteins for improved or modified characteristics has successfully been applied in the areas of protein–ligand binding, improving protein stability and modifying enzyme selectivity. A wide range of techniques are now available for generating gene libraries with different characteristics. This review will discuss these different methodologies, their accessibility and applicability to non-expert laboratories and the characteristics of the libraries they produce. The aim is to provide an up-to-date resource to allow groups interested in using directed evolution to identify the most appropriate methods for their purposes, and to guide those moving on from initial experiments to more ambitious targets in the selection of library construction techniques. References are provided to original methodology papers and other recent examples from the primary literature that provide details of experimental methods.

Introduction

Directed molecular evolution has earned a secure position in the range of techniques available for protein engineering (for recent general reviews see [1, 2, 3, 4, 5]). Despite continued advances in our understanding of protein structure and function it is clear that there are many aspects of protein function that we cannot predict. It is for these reasons that ‘design by statistics’ or combinatorial strategies for protein engineering are appealing. All such combinatorial optimisation strategies require two fundamental components: a library, and a means of screening, or selecting from, that library. The application of combinatorial strategies to protein engineering therefore requires, above all else, the construction of a library of variant proteins. The most straightforward method of constructing a library of variant proteins is to construct a library of nucleic acid molecules from which the protein library can be translated. This also has the advantage that (as long as a link between protein and nucleic acid is maintained) the identity of any selected protein can be directly determined by DNA sequencing. Much of the appeal of directed evolution of proteins lies in the fact that the coding information is held in a molecular medium which is straightforward to amplify, read, and manipulate, while the functional molecule, the protein, has a rich chemistry that provides a wide range of possible activities. The methodology of choice in a directed evolution experiment is therefore to construct a library of variant genes, and screen or select from the protein products of these genes. Advances in screening methodology have been reviewed elsewhere and will not be discussed here (see reference [6] for a recent review and papers in [7] for detailed protocols). A wide variety of methods have been developed for the construction of gene libraries. The most recent collection of detailed protocols may be found in reference [8]. The purpose of this review is to give an overview of the different methods available, and how they relate to each other, as well as how they may be combined.

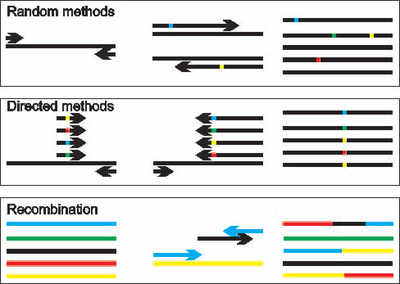

Methods for the creation of protein-encoding DNA libraries may broadly be divided into three categories (Figure 1). The first two categories encompass techniques that directly generate sequence diversity in the form of point mutations, insertions or deletions. These can be divided in turn into methods where changes are made at random along a whole gene and methods that involve randomisation at specific positions within a gene sequence. The first category, randomly targeted methods, encompasses most techniques in which the copying of a DNA sequence is deliberately disturbed. These methods, which include the use of physical and chemical mutagens, mutator strains and some forms of insertion and deletion mutagenesis as well as the various forms of error-prone PCR (epPCR), generate diversity at random positions within the DNA being copied. The second category of methods targets a controlled level of randomisation to specific positions within the DNA sequence. These methods involve the direct synthesis of mixtures of DNA molecules and are usually based on the incorporation of partially randomised synthetic DNA cassettes into genes via PCR or direct cloning. The key to these methods is the introduction of diversity at specific positions within the synthetic DNA. Thus these two approaches to generating diversity are complementary. The third category of techniques for library construction are those that do not directly create new sequence diversity but combine existing diversity in new ways. These are the recombination techniques, such as DNA shuffling [9, 10] and Staggered Extension Process [11] that take portions of existing sequences and mix them in novel combinations. These techniques make it possible to bring together advantageous mutations while removing deleterious mutations in a manner analogous to sexual recombination. 
Also belonging to this category are methods such as Iterative Truncation for the Construction of Hybrid Enzymes [12] that make it possible to construct hybrid proteins even when the genes have little or no sequence homology. It should be noted that while these techniques do not in principle produce new point mutations, they are generally dependent on a PCR reconstruction process that can be error prone, and new point mutations are usually produced as a by-product of these techniques.

This review will discuss each of these categories of methodology in turn, highlighting the advantages and disadvantages of each methodology, the characteristics of the libraries that each method would ideally produce and the known or likely deviations from this ideal in reality. The patent status of different techniques will also be discussed.

Generating diversity throughout a DNA sequence

In vivo mutagenesis

Generation of mutations by directly damaging DNA with chemical and physical agents has been used to dissect biological systems for many years and will not be discussed in detail here. However, it does provide a valuable point of comparison to other methodologies. The basis of mutagenesis by UV irradiation or alkylating agents is that the damaged DNA is incorrectly replicated or repaired, leading to mutation. The idea of relaxing the usually very high fidelity of DNA replication is also exploited in mutator strains. These bacterial strains have defects in one or several DNA repair pathways leading to a higher mutation rate. Genetic material that passes through these cells accumulates mutations at a vastly higher rate than usual. This is an effective and straightforward way of introducing mutations throughout a DNA construct. However, in common with physical and chemical mutagens, the mutagenesis is indiscriminate. Thus the construct carrying the gene of interest as well as the gene itself, and indeed the chromosomal DNA of the host cell, suffer mutation. The process of mutagenesis using mutator strains can also be quite slow as the level of mutagenesis is controlled by the length of time the DNA spends in the strain. Constructing a library with mutagenesis levels of one or two nucleotide changes per gene can require multiple passages through the mutator strain. It is primarily this second disadvantage that has led to the almost universal use of error-prone PCR methods for the generation of diversity for directed evolution experiments. However, the simplicity of mutator strains will appeal to groups entering the area of directed evolution, particularly those with less experience in molecular biology. They may also be the most appropriate methodology when a simple initial experiment is required to generate preliminary results.
The XL1-Red strain, commercially available from Stratagene, has been used in most experiments that utilise this strategy (for examples see [13, 14]). The use of mutator strains for library construction has been reviewed [15].

Error-prone PCR

The error-prone nature of the polymerase chain reaction has been an issue almost since the initial development of PCR. However, even the relatively low fidelity Taq DNA polymerase is too accurate to be useful for the construction of combinatorial libraries under standard amplification conditions. Increases in error rates can be obtained in a number of ways. One of the most straightforward and popular methods is the combination of introducing a small amount of Mn2+ (in place of the natural Mg2+ cofactor) and including biased concentrations of dNTPs [16, 17]. The presence of Mn2+ along with an over-representation of dGTP and dTTP in the amplification reaction leads to error rates of around one nucleotide per kilobase in the final library (see references [17, 18, 19, 20] for examples that give detailed protocols). The level of mutagenesis can be controlled within limits by the proportion of Mn2+ in the reaction or by the number of cycles of amplification [17]. For higher rates of mutagenesis Zaccolo and Gherardi [21] report a number of nucleoside triphosphate analogues that lead to high and controllable levels of misincorporation. Performing the reaction in the presence of these modified bases leads to mutation rates of up to one in five bases [21, 22]. In addition to these ‘home made’ approaches, kits are available from both Clontech (Diversify PCR Random Mutagenesis Kit) and Stratagene (GeneMorphII System). The Clontech system is based on modified Taq polymerase and provides ready mixed reagents for modifying mutation rates by changing the concentrations of Mn2+ and dGTP. The Stratagene GeneMorphII system is based on a combination of Taq and a highly error-prone polymerase (Mutazyme) which is designed to give a more even mutational spectrum than Taq alone. This kit is straightforward to use, comes with detailed instructions and is therefore appealing to those entering the area.
The level of mutagenesis is controlled by the concentration of template used and the number of serial amplification reactions performed. The kit can also be purchased with the materials for a three step megaprimer based cloning process.
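To get a feel for what an error rate of around one nucleotide per kilobase means for a library, the following is a minimal sketch that assumes errors occur independently along the gene, so that the number of mutations per clone follows a Poisson distribution. The Poisson model, the 1 kb gene length and the function name are illustrative assumptions and are not taken from the cited protocols.

```python
import math

def mutation_count_distribution(rate_per_kb, gene_length_bp, max_mutations=5):
    """Poisson probabilities of 0..max_mutations nucleotide changes per clone.

    Assumes independent errors at a uniform rate (an idealisation; real
    error-prone PCR is biased, as discussed in the following section).
    """
    mean = rate_per_kb * gene_length_bp / 1000.0
    return [
        math.exp(-mean) * mean ** k / math.factorial(k)
        for k in range(max_mutations + 1)
    ]

# Hypothetical example: a 1 kb gene at one mutation per kilobase.
probs = mutation_count_distribution(rate_per_kb=1.0, gene_length_bp=1000)
for k, p in enumerate(probs):
    print(f"{k} mutations: {p:.3f}")
```

Under these assumptions roughly 37% of clones carry no mutation at all and a similar proportion carry exactly one, which is one reason the mutation rate is usually tuned to the length of the target gene.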

A range of other approaches have been described for generating random variation along a genetic sequence, including the generation of abasic sites followed by error-prone 'correction' [23], and the mutagenesis of an mRNA sequence via an error-prone RNA replicase followed by selection of active sequences by ribosome display [24]. Using an error-prone rolling circle amplification, as opposed to PCR, can remove the need for ligation of the product into a plasmid prior to transformation [25] (protocol at Nature Protocols [26]). An alternate approach is PCR amplification of the whole plasmid under error-prone conditions followed by self ligation and transformation [27].

The bias problem

The methodologies for error-prone PCR all involve either a misincorporation process in which the polymerase adds an incorrect base to the growing daughter strand and/or a lack of proofreading ability on the part of the polymerase. The inherent characteristics of the polymerase used mean that some types of error are more common than others [17, 21]. The appeal of the error-prone PCR approach is that it leads to randomisation along the length of the DNA sequence, ideally leading to a library in which all potential mutations are equally represented. However, the construction of such an ideal library relies on all possible mutations occurring at the same frequency. The fact that specific types of error in the amplification process are more common than others means that specific mutations will occur more often than others, leading to a bias in the composition of the library. This ‘error bias’ means that libraries have non-random composition with respect to both the position and the identity of changes. Error-prone PCR using Taq and the Stratagene GeneMorph kit have different biases, making it possible to use a combination of techniques to construct a less biased library [28, 29, 30]. The new generation of the Stratagene GeneMorph kit (GeneMorphII Random Mutagenesis Kit) claims a significantly reduced bias, presumably due to the combined use of Taq and Mutazyme.

There are two other sources of bias in libraries constructed by error-prone PCR. The first of these is a ‘codon bias’ that results from the nature of the genetic code. Error-prone PCR introduces single nucleotide mutations into the DNA sequence. Even without error bias, single mutations will lead to a bias in the variant amino acids that the mutated DNA encodes. For example, single point mutations in a valine codon are capable of encoding phenylalanine, leucine, isoleucine, alanine, aspartate, or glycine. To access the codons for other amino acids either two point mutations (C, S, P, H, R, N, T, M, E, Y) or even three (Q, W, K) are required. The result of this codon bias is that specific amino acid changes will be much less common than others in any library constructed by error-prone PCR. Analysis of model systems suggests that in a library of 10^9 sequences the most frequent 100 make up 7% of the library [31]. It can be argued that this bias in the genetic code is optimised to ensure that amino acid substitutions are biased towards those that are less likely to cause loss of function [32]. However, where the object is to efficiently screen a specific subset of possible mutations there is no advantage in being forced to screen 100 valine to alanine conversions to be sure that the valine to tryptophan mutation is in fact deleterious.
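The valine example can be checked by enumerating the standard genetic code. The sketch below assumes the valine codon is GTT (GTC gives the same result); the choice of codon and the function names are illustrative assumptions. Other valine codons differ slightly: GTG, for instance, reaches methionine and glutamate in a single step.

```python
from itertools import product

# Standard genetic code, codons ordered TTT, TTC, TTA, TTG, TCT, ... ('*' = stop).
BASES = "TCAG"
AMINO_ACIDS = "FFLLSSSSYY**CC*WLLLLPPPPHHQQRRRRIIIMTTTTNNKKSSRRVVVVAAAADDEEGGGG"
CODON_TABLE = {
    "".join(codon): aa
    for codon, aa in zip(product(BASES, repeat=3), AMINO_ACIDS)
}

def min_mutations_to_amino_acids(start_codon):
    """Fewest point mutations needed to reach each amino acid (or stop)."""
    best = {}
    for codon, aa in CODON_TABLE.items():
        distance = sum(a != b for a, b in zip(start_codon, codon))
        if aa not in best or distance < best[aa]:
            best[aa] = distance
    return best

def group_by_distance(start_codon):
    """Group the other 19 amino acids by the number of mutations required."""
    groups = {1: set(), 2: set(), 3: set()}
    for aa, distance in min_mutations_to_amino_acids(start_codon).items():
        if distance > 0 and aa != "*":
            groups[distance].add(aa)
    return groups

groups = group_by_distance("GTT")  # assumed valine codon
for distance in (1, 2, 3):
    print(distance, "".join(sorted(groups[distance])))
```

For GTT this reproduces the grouping given in the text: six amino acids are one mutation away, ten need two mutations, and glutamine, tryptophan and lysine need all three positions changed.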

The final source of bias, ‘amplification bias’, is a characteristic of any mutagenesis protocol that involves an amplification step, particularly PCR amplification. PCR is by its nature an exponential amplification process. If, in an imaginary PCR amplification reaction from a single molecule, a mutation is introduced in the first copying step, then this mutation will be present in 25% of the product molecules. Such an extreme situation is unlikely to arise in a real experiment but the point is clear. Any molecule that is copied early in the amplification process will be over-represented in the final library. Owing to the exponential nature of the amplification such an over-representation can be serious. However, such bias can be difficult to detect by sequence analysis, particularly in large libraries. The problem can to some extent be overcome by performing several separate error-prone PCRs and combining these to construct the final library. Another strategy is to reduce the number of amplification cycles, but changing the number of amplification cycles is also one of the most straightforward ways of controlling the level of mutagenesis. A combination of multiple amplification reactions and reducing the number of amplification cycles is the most effective means of combating this form of bias. Amplification bias could be a serious problem when statistical conclusions are being sought from experiments. It is not, however, a significant issue when the aim is the improvement of protein characteristics (as long as results of the desired quality are obtained).
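The 25% figure follows from simple strand counting, and the same arithmetic shows how quickly the effect fades for mutations that arise in later cycles. The sketch below is an idealised model that assumes every strand is copied exactly once per cycle and that a single mutation arises in one newly made strand; both function names are hypothetical.

```python
from fractions import Fraction

def mutant_strand_fraction(template_duplexes, cycle_of_mutation):
    """Closed form: one mutant strand among all strands present after that cycle."""
    strands_after_cycle = 2 * template_duplexes * 2 ** cycle_of_mutation
    return Fraction(1, strands_after_cycle)

def simulate_mutant_fraction(template_duplexes, cycle_of_mutation, total_cycles):
    """Count wild-type and mutant strands cycle by cycle (perfect doubling assumed)."""
    wild, mutant = 2 * template_duplexes, 0
    for cycle in range(1, total_cycles + 1):
        new_wild, new_mutant = wild, mutant  # every strand is copied once
        if cycle == cycle_of_mutation:
            new_wild -= 1                    # one new copy picks up the mutation
            new_mutant += 1
        wild += new_wild
        mutant += new_mutant
    return Fraction(mutant, wild + mutant)

# The imaginary reaction from the text: one template molecule, error in the first cycle.
print(mutant_strand_fraction(1, 1))          # 1/4
print(simulate_mutant_fraction(1, 1, 25))    # 1/4
# The same single error arising in cycle 10 instead.
print(mutant_strand_fraction(1, 10))         # 1/2048
```

In this model the fraction is fixed as soon as the mutation exists, so starting from more template molecules or using fewer cycles reduces how far any one early error can dominate the library.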

Introducing controlled deletions and insertions at random locations: a new type of sequence diversity

Error-prone PCR protocols are effective at changing the DNA sequence by converting one nucleotide to another. In contrast, single nucleotide insertions, and more commonly deletions, are produced but at a much lower rate. This low rate is desirable for library construction as single nucleotide insertions and deletions lead to frameshifts which complicate analysis and screening, and often simply produce truncated proteins. However, the insertion and deletion of amino acids in the protein structure is clearly a desirable type of diversity to explore, providing a new ‘dimension’ in the protein sequence search space [33]. Although it is possible to introduce or remove [34] the codons for amino acids at specific positions within the protein-encoding DNA by oligonucleotide based methods (see below), it is only recently that techniques have been reported for the insertion and deletion of codons at random locations throughout the gene. There has therefore been relatively little investigation of the value of random insertion and deletion in directed evolution experiments to date. The availability of some recently developed techniques [35, 36] now makes these investigations possible.

The introduction of modified transposons into DNA sequences as a means of creating controlled insertions at random locations has been reviewed by Hayes and Hallet [37]. These methods require specialist DNA constructs and are limited in that only specific sequences can be introduced. A general method for creating deletions and repeats at random locations and of random lengths is described by Pikkemaat and Janssen [38]. This method utilises Bal31 nuclease to delete DNA from one end of the template gene. The 5’ and 3’ ends of the gene are treated in separate pools and then recombined by ligation. The ligated products will either contain deletions or sequence repeats. The process is relatively straightforward and easy to perform. One disadvantage of this approach is that the majority of 5’ and 3’ fragments will be ligated out of frame, leading to nonsense mutations. The other is that sequence material is limited to that of the source DNA. However, as the authors argue [38], this is a known pathway for natural evolution, and in particular it is known to be important in the evolution of their system, the haloalkane dehalogenases.
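The statement that most ligated products are out of frame can be illustrated with a deliberately simplified model. The sketch assumes that the nuclease leaves every possible end point with equal probability in both pools and that any 5’ fragment can ligate to any 3’ fragment. These assumptions, the 300 bp gene length and the function name are illustrative and are not taken from reference [38].

```python
from fractions import Fraction

def in_frame_fraction(gene_length):
    """Fraction of 5'/3' fragment pairings that keep the reading frame.

    bases_kept_5p:    bases retained at the start of the gene in the 5' fragment
    bases_removed_3p: bases removed from the start of the gene in the 3' fragment
    The net length change is their difference: negative values are deletions,
    positive values are repeats, and zero regenerates the original length.
    """
    in_frame = total = 0
    for bases_kept_5p in range(1, gene_length):
        for bases_removed_3p in range(1, gene_length):
            total += 1
            if (bases_kept_5p - bases_removed_3p) % 3 == 0:
                in_frame += 1
    return Fraction(in_frame, total)

# Hypothetical 301 bp target, giving 300 possible end points in each pool.
print(in_frame_fraction(301))  # 1/3
```

Under this uniform model only one pairing in three preserves the reading frame, so about two thirds of the library is lost to frameshifts before any screening takes place.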

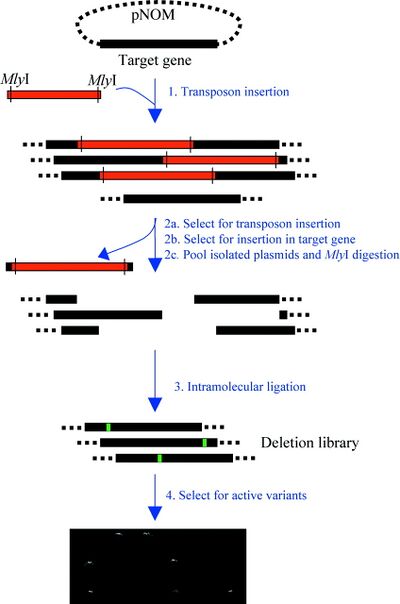

In 2002 a general methodology for inserting or deleting sequences of defined length and identity was reported by Murakami et al. [35, 36]. This method, termed “Random Insertion/Deletion” (RID) mutagenesis, enables the deletion and subsequent insertion of an arbitrary number of bases of arbitrary sequence at random along a target gene sequence. The disadvantage of the RID method is that it is a complex multistep procedure requiring a significant investment in resources, particularly in the time to ensure that each step is working correctly. Other potentially simpler methods have followed. The method described by Jones [39, 40] uses transposon based insertion with a cassette containing two MlyI restriction sites. After transposon insertion, cutting with MlyI and religation removes both recognition sites and the majority of the transposon insert. The cassettes can be designed to enable insertion or deletion mutations with controlled sequence. The RAISE method utilises a shuffling type approach (see below) where the template DNA is fragmented but with the additional step of adding short random sequences via the action of terminal deoxynucleotidyl transferase (TdT) [40]. RAISE has the advantage of simplicity and can also lead to further recombination when the DNA fragments are reconstructed into the full length gene. However, it does not provide the control of the identity of the inserted or deleted sequence provided by RID or the approach of Jones.

Relatively few reports have exploited the potential of these methods for generating libraries. However the libraries obtained from using these methods generally have a high number of functional variants and identify beneficial mutations that are not accessible by conventional error-prone mutagenesis approaches [35, 39, 40].

Summary

Error-prone PCR methods remain one of the most popular approaches for generating libraries for directed evolution experiments. The ease with which mutations can be generated by modifying PCR conditions (addition of Mn2+, biasing of dNTP concentrations or addition of dNTP analogues) makes these methods appealing for any laboratory that is approaching directed evolution as a means to an end. The use of mutator strains is somewhat less popular but may be particularly useful for laboratories with less experience in molecular biology. Most of these methods produce libraries with a bias in the type of nucleotide mutations (error bias), a bias in the types of amino acid changes seen in the protein (codon bias) and a bias in the distribution of specific sequences in the library (amplification bias). The error bias can be overcome to a certain extent by combining polymerases with a different bias. Most reported directed evolution experiments have used either error-prone PCR, some form of shuffling (below) or a combination of both. More complex approaches are required for generating random insertions and deletions but this form of diversity appears to provide valuable variants in the relatively small number of examples that have been investigated. This new dimension of diversity provided by amino acid insertions and deletions remains largely unexplored.

Directing diversity: Oligonucleotide-based methods

The techniques described above all, at least ideally, generate diversity along the length of a DNA sequence. The techniques discussed in this section are at the opposite extreme and at their simplest randomize a single position in the target gene. All of these techniques are based on the incorporation of a synthetic DNA sequence within the coding sequence. The synthetic DNA is randomized at specific positions and this randomization is incorporated directly into the target gene. There are therefore two elements to all of these techniques: first the means by which the DNA itself is randomized during synthesis, and secondly the methodology for incorporating the synthetic oligonucleotide. These two issues will be discussed separately, although some issues raised by one can be dealt with by the other and vice versa.

The synthesis of randomized oligonucleotides

The value of oligonucleotide-based mutagenesis is that control over the chemistry of DNA synthesis allows complete control over the level, identity and position of randomization. Thus, if an oligonucleotide can be synthesized as a mixture, or if a number of synthetic oligonucleotides can be mixed, then this can be incorporated directly into a complete gene sequence. There are a wide range of techniques from the field of combinatorial chemistry that are available to a combinatorial biologist. Indeed, the biologist has an advantage over the chemist as a mixture of genes can be readily separated for analysis by transformation into bacterial cells and isolation of single transformed colonies.

The synthesis of degenerate oligonucleotides is well established; synthetic primers incorporating mixtures of any combination of the four natural bases at any position can be ordered directly from most suppliers. Such pieces of synthetic DNA can be used to completely randomise a specific position within a gene. The synthesis of ‘doped’ oligonucleotides where a small proportion have a mutation at a specific position or positions is a slightly more specialist process but oligonucleotides of this type can be ordered from most suppliers. These are used to generate libraries where the randomisation is spread out but still targets those positions that are doped in the primers. Any synthetic process where a number of reagents are used as mixtures is susceptible to bias arising from greater incorporation of one reagent than another. Quantitative studies indicate that where synthesis is carefully controlled and/or uses optimised reagents (e.g. Transgenomic’s ‘Precision Nucleotide Mix’) then this bias is small in synthetic DNA libraries [41, 42]. It should be noted that this relative lack of bias is not maintained when these libraries are cloned, although the reason for this is not clear [42].

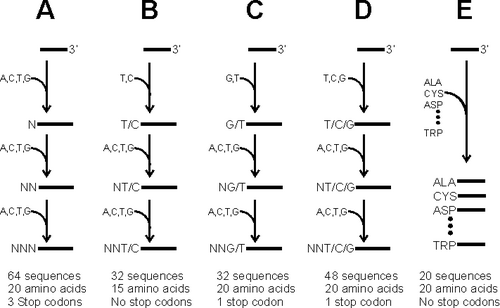

Another bias problem arises due to the mismatch between the base-by-base synthesis of the oligonucleotide and the triplet nature of the genetic code. To randomize a codon so that it can encode all 20 amino acids, a mixture of all four bases is required at the first two positions and at least two bases (G with either T or C) in the third position. This in turn leads to a form of codon bias, as a fully randomized codon contains six times as many codons for some amino acids, such as serine, as for others, such as tryptophan and methionine. In addition, there is the potential for the introduction of stop codons. This can be avoided by limiting the mixture of bases at the third position of the codon to T and C, but this means that codons for a range of amino acids will not be present (Figure 3). One compromise is to randomize the codon with T, C or G in the final position, which encodes all 20 amino acids and gives only one stop codon in every 48 codons. Alternatives are NNG/T or NNG/C, which also provide all 20 amino acids but with slightly more frequent stop codons (one in every 32). Another result of this form of codon bias is that it is difficult to insert codons for a subset of amino acids if this is desirable.
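The trade-offs between these randomisation schemes can be tabulated directly from the standard genetic code. The following sketch counts, for each choice of bases at the third codon position, the number of codons, the number of stop codons and the number of distinct amino acids encoded. The scheme labels follow the notation used in this review; the function name is a hypothetical choice.

```python
from itertools import product

# Standard genetic code, codons ordered TTT, TTC, TTA, TTG, TCT, ... ('*' = stop).
BASES = "TCAG"
AMINO_ACIDS = "FFLLSSSSYY**CC*WLLLLPPPPHHQQRRRRIIIMTTTTNNKKSSRRVVVVAAAADDEEGGGG"
CODON_TABLE = {
    "".join(codon): aa
    for codon, aa in zip(product(BASES, repeat=3), AMINO_ACIDS)
}

# Bases allowed at the third position; the first two positions are fully random (N).
SCHEMES = {
    "NNN": "TCAG",
    "NNT/C": "TC",
    "NNT/C/G": "TCG",
    "NNG/T": "GT",
    "NNG/C": "GC",
}

def summarise(third_position_bases):
    """Return (codons, stop codons, distinct amino acids) for one scheme."""
    codons = ["".join(c) for c in product(BASES, BASES, third_position_bases)]
    encoded = [CODON_TABLE[c] for c in codons]
    stops = encoded.count("*")
    amino_acids = set(encoded) - {"*"}
    return len(codons), stops, len(amino_acids)

for name, third in SCHEMES.items():
    n_codons, n_stops, n_amino_acids = summarise(third)
    print(f"{name:8s} codons={n_codons:2d} stops={n_stops} amino acids={n_amino_acids}")
```

The counts show that only the T/C scheme is free of stop codons, at the cost of losing five amino acids, while the two-base G/T and G/C schemes keep all 20 amino acids with a single stop codon among 32 codons.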

A number of solutions have been developed to this problem. The simplest solution is to synthesise the DNA for each desired mutation separately. For relatively small libraries the falling cost of oligonucleotide synthesis makes this possible with the size of the library limited by the size of the budget and not by technical considerations. The oligonucleotides can then be either mixed or used separately to construct the gene library. A second solution is to use trinucleotide phosphoramidites in the synthesis of the oligonucleotides. This solves the problem of the codon bias by synthesising the DNA one codon at a time. If it is desired to completely randomise one amino acid then a mixture of twenty codons can be added (Figure 3). If a low level of mutagenesis is required then the mixture will be present at a lower concentration than the wildtype codon and if a subset of amino acids is desired then this is easily accommodated by including only the desired codons. However the trimer phosphoramidites are not straightforward (or cheap) to prepare. A number of syntheses are described in the literature [43, 44, 45] with probably the most appealing being the large scale solid phase synthesis described by Kayushin et al. [46]. These reagents have recently become commercially available from Glen Research making the strategy more accessible to the general user. Twenty specific trinucleotide phosphoramidites are available, one for each amino acid, as well as a mixture of all twenty prepared for direct use in oligonucleotide synthesis.

The difficulty involved in synthesising and using trinucleotide phosphoramidites led Gaytan et al. to develop a strategy based on orthogonal protecting groups [47]. In this strategy the wildtype sequence is synthesised using standard acid labile trityl protecting groups but at each point where mutagenesis is desired the penultimate phosphoramidite is spiked with a small proportion of Fmoc protected monomer. The synthesis of the wildtype codon continues with standard trityl chemistry. Once the wildtype codon is complete the base labile Fmoc groups are removed and the mutagenic codon is synthesised with Fmoc chemistry. This can also be used to remove stop codons while still maintaining access to codons for all twenty amino acids. The Fmoc protected phosphoramidites are relatively straightforward to synthesise [48, 49] in comparison to trinucleotide phosphoramidites, making this strategy more accessible. The group have more recently improved the methodology by developing Fmoc protected dinucleoside phosphoramidites which reduce the number of additions required while still maintaining access to 18 of the 20 amino acids [49]. A similar but slightly simpler approach can also be applied to generating random codon deletions by carrying out a substoichiometric Fmoc protection prior to the addition of the first base in the target codons followed by Fmoc deprotection after the addition of the last base in the codon [50]. However, this family of approaches is still limited to laboratories with access to a DNA synthesiser and synthetic experience.

Another strategy, which is logically similar, is derived from classical split and mix approaches. In this case instead of being differentiated by protecting groups a proportion of oligonucleotides destined for mutagenic codons are physically separated from the wildtype sequences [51, 52]. Again this methodology requires access to a DNA synthesiser and, as originally reported, required extensive manipulations to allow for the removal and recombining of the solid support. Using this type of approach it is possible to prepare a library of oligonucleotides that target multiple positions with no codon bias, and with only one codon being randomised in each DNA sequence, without requiring any reagents beyond those required for standard DNA synthesis.

Overall, while modern chemistry makes possible a high level of control over the makeup of a library of oligonucleotides, most of these sophisticated approaches require access to a DNA synthesiser at a minimum and can require considerable expertise and resources for synthesis as well. These techniques are likely to remain the preserve of specialist laboratories. Most users are restricted to the choice between NNN, NNT/C, NNT/G, NNG/C and NNT/G/C codons in their oligonucleotides or the option of ordering a large number of individual mutagenic sequences. In most cases this is not a serious restriction as the most common use of oligonucleotide directed mutagenesis is to randomise a limited number of positions. Most commonly a primer directed method is used to completely randomise specific positions that have been identified by screening libraries constructed by error-prone PCR [32, 53, 54, 55]. As the library sizes are relatively small, an inefficiently constructed library is not a serious drawback. The problem of stop codons and codon bias only becomes a serious issue when the libraries are very large and efficiency is crucial, or where statistical analysis is required.

Incorporating synthetic DNA into full-length genes

Regardless of how an oligonucleotide is synthesised, whether a single codon or several are randomised, and whether the level of mutagenesis is high or low, it is necessary to incorporate the synthetic DNA sequence into a full-length gene. A wide range of methods is available based on conventional site directed mutagenesis techniques and these will not be reviewed in detail here. The basic requirement of incorporation is that the level of wildtype sequence contamination should be as low as possible. For this reason PCR based techniques such as strand overlap extension (SOE) and megaprimer based procedures are usually the method of choice (for examples and protocols see [56, 57, 58, 59, 60, 61]). Some groups have used mutagenic plasmid amplification (MPA, marketed in kit form by Stratagene as the QuikChange system) and related methods successfully. A number of papers discuss improvements to the QuikChange system, including the use of megaprimers [62, 63], meaning only one mutagenic primer is required, and improvements in the approach to primer design [64, 65]. An alternative approach utilising the insertion of Type II restriction endonuclease recognition sites requires two PCR reactions but, following digestion with appropriate restriction enzymes, allows ligation directly to generate the desired plasmid construct [66]. Synthetic primers can also be incorporated via a number of recombination strategies and these will be discussed below.

The problem of bias arises again with primer incorporation procedures. As discussed above, with any procedure that includes an exponential amplification there is the potential problem of amplification bias. If a great effort has been expended on removing bias from the oligonucleotide library, then reducing it at later stages of the construction process is clearly desirable. An additional problem with primer incorporation is that those sequences with greatest similarity to the wild-type DNA sequence will be incorporated more efficiently than those that diverge more. In a PCR-based strategy primers mutagenized near the 5'-end will be more efficiently incorporated than those modified near the 3'-end. Careful design of primers and the provision of a reasonable length of fully annealing sequence at the 3'-end can reduce the risk of this occurring. Reduction in amplification bias is again best achieved by performing a number of separate amplifications with the smallest possible number of cycles.
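The benefit of keeping the number of cycles small can be sketched with a deliberately idealised model, in which each variant is multiplied by (1 + efficiency) in every cycle. The function name and the efficiency values below are assumptions chosen for illustration, not measured quantities.

```python
def skew_after_pcr(eff_a, eff_b, cycles):
    """Ratio of variant A to variant B after `cycles`, starting from equal amounts.

    Assumes idealised exponential amplification in which each cycle multiplies
    a variant by (1 + efficiency), with efficiency between 0 and 1.
    """
    return ((1.0 + eff_a) / (1.0 + eff_b)) ** cycles


if __name__ == "__main__":
    # Hypothetical per-cycle efficiencies of 95% and 90% for two variants
    for cycles in (5, 10, 20, 30):
        print(cycles, round(skew_after_pcr(0.95, 0.90, cycles), 2))
```

In this model even a small difference in per-cycle efficiency compounds with every additional cycle, which is why several separate low-cycle amplifications are preferable to a single long one.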

Simple methodologies are capable of randomising a gene in a single region but the length of oligonucleotides that can be reliably synthesised limits the size of the region that can be randomised. Randomising multiple regions requires either multiple rounds of mutagenesis or more sophisticated methods. One approach is to utilise a chain extension approach with multiple mutagenic primers binding to a template. Seyfang and Jin [67] use unique anchor sequences in primers that bind to the 5' and 3' ends of the template gene to provide selective amplification following extension and ligation of the mutagenic primers. A similar approach was described to generate a full-length cowpea mosaic virus with multiple mutagenesis sites [68]. Inserting Type II restriction enzyme sites with different overhangs can also enable multiple fragments to be readily recombined [66].

The Assembly of Designed Oligonucleotides method [69] and Synthetic Shuffling [70] utilise a shuffling type approach with synthetic oligonucleotides. For specific targeted sites this can be combined with template DNA or, conversely, the QuikChange system can be used with multiple mutagenic primers to randomise multiple positions [71]. Another, similar, approach is to construct overlapping gene segments by PCR with mutagenic primers and then reconstruct these in an overlap extension reaction. If a small number of gene fragments (up to 4) are used then the reconstruction can be performed by strand extension rather than PCR amplification (i.e. without external primers). This has the advantage of reducing the risk of amplification bias. There is a linear rather than exponential amplification bias in the construction of the fragments as the randomised region is in the primers. Using PCR rather than strand extension in the second step will lead to exponential bias towards those full-length fragments copied early in the amplification process. However, PCR is required to reconstruct genes from larger numbers of fragments as the yield of full-length product from the strand extension reaction drops rapidly as the number of fragments increases.

MAX randomization: beating the bias problem with ordinary oligonucleotides

The appeal of a trinucleotide phosphoramidite based synthesis, or the type of split and mix strategy pursued by Lahr et al. [51], is the removal of codon bias and the ability to include codons for any subset of amino acids at a given position. A recently described oligonucleotide incorporation approach provides many of the same advantages while only requiring simple primers [72]. The MAX system described by Hine and co-workers relies on the annealing of specific oligonucleotides to select the specific subset of codons desired from a template that is completely randomised at the target codons (Figure 4). A template oligonucleotide is synthesised covering the region to be mutagenised, with each target codon completely randomised (NNN). Specific primers are then synthesised that cover the region 5’ to each target codon and terminate with each specific codon that is required for inclusion in the library. Thus if codons for all twenty amino acids are required then twenty ‘selection primers’, one with each codon, are synthesised. A set of primers is synthesised for each codon to be randomised. These primers are then annealed to the template and ligated. The selection primers will only ligate to the primer immediately 5’ when that primer is completely annealed to the template. The selection primers therefore select a subset from the 64 codons in the template. The ligated single strand containing the selection primers is then converted to dsDNA for incorporation into the full-length gene.

The advantage of the MAX system is that, although a sizable number of primers are required, the maximum number will be 20 times the number of codons to be randomized. If three codons are randomized then 60 primers are required, but these 60 can be used to construct a library containing 8000 mutants with no codon bias. Amplification bias is a potential issue if PCR is used to construct the second strand. This can be reduced by the usual methods or by using a simple second-strand synthesis rather than PCR. The MAX system is therefore an excellent means of randomizing multiple codons within a single region. The reconstructed double-stranded DNA can then be either ligated directly into an expression construct or used in strand extension reactions to reconstruct the full-length gene. MAX is not advantageous if a single codon is to be randomized and cannot be used if more than two adjacent codons are to be independently randomized. Again, it is a more complex technique and is therefore less likely to appeal to the general user. However, it allows the construction of unbiased libraries by users without access to a DNA synthesizer and will therefore be extremely valuable where efficient screening of medium to large (10^3 to 10^6 variants) libraries is required.
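The primer and library arithmetic above can be checked with a few lines of Python. The function names are illustrative; the comparison with a fully randomised NNN design simply counts DNA sequences and is included only to show how much larger the unselected template pool is.

```python
def max_primer_count(n_codons, codons_per_position=20):
    """Selection primers needed: one per desired codon at each randomised position."""
    return n_codons * codons_per_position


def max_library_size(n_codons, codons_per_position=20):
    """Number of distinct variants when every position carries one codon per amino acid."""
    return codons_per_position ** n_codons


def nnn_dna_variants(n_codons):
    """Number of distinct DNA sequences in a template with NNN at every position."""
    return 64 ** n_codons


if __name__ == "__main__":
    for n in (1, 2, 3):
        print(n, max_primer_count(n), max_library_size(n), nnn_dna_variants(n))
```

For three codons this gives 60 primers and 8000 variants, as stated in the text, against 262144 DNA sequences for the fully randomised NNN template.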

Summary

Oligonucleotide-directed methods offer a very powerful route to randomizing specific chosen positions and regions within protein encoding DNA sequences. The essence of oligonucleotide-directed methods is that a synthetic DNA sequence is incorporated into the full-length gene. This means that any form of randomization that can be achieved in a synthetic DNA fragment can be replicated in the full-length gene. A wide range of synthetic strategies are available that allow highly precise and controlled randomization within oligonucleotides. However, the majority of these techniques require access to a DNA synthesizer and some require the synthesis of reagents that are not, as yet, commercially available. In most cases randomization of codons to NNN or NNT/G/C, either at saturation levels or at some lower level, will be sufficient.

A range of straightforward techniques is available for incorporating synthetic DNA sequences into full-length genes. Overlap extension and megaprimer protocols are simple to use and are the most popular methods. The incorporation of multiple primers is more complex but can be achieved by a number of methods that are reasonably straightforward to apply. The MAX technique recently described by Hine and co-workers [72] offers an elegant and accessible approach to efficiently constructing libraries where multiple codons are randomized.

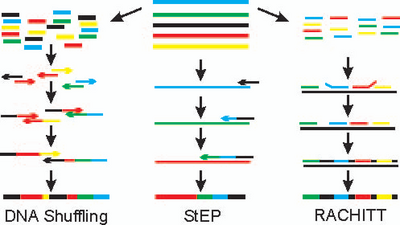

Techniques for recombination of DNA sequences

The methods described above all produce sequence diversity, either along the length of a sequence or at specific positions. Natural evolution, however, also exploits recombination to bring together advantageous mutations and separate out deleterious mutations. Until 1993 there were no random recombination methods available for directed evolution. The original DNA shuffling technique [9, 10, 73] allowed a step change in what was possible with directed evolution and is still one of the most popular tools in any optimisation strategy. It was now possible to recombine a range of similar genes from different sources, or to combine selected point mutations in novel combinations. A number of other techniques are now available, each with their own characteristics and uses, including the Staggered Extension Process (StEP) [11, 74], Random Chimeragenesis on Transient Templates (RACHITT) [75, 76] and the various techniques based on Incremental Truncation for the Creation of Hybrid Enzymes (ITCHY) [12, 77, 78, 79]. All of these methods are based on linking gene fragments together. In the case of DNA shuffling, RACHITT, and ITCHY these fragments are physically generated by cleavage of the source DNAs and then recombined, whereas in StEP the fragments are added to the growing end of a DNA strand in rapid rounds of melting, strand annealing and extension. The net result of all these techniques is to bring fragments from different source genes into one DNA molecule, recombining the source DNA in new ways to form novel sequences (Figure 5).

DNA shuffling is the most popular of the recombination techniques (see [20, 57, 80, 81] for recent examples with experimental details) because it is straightforward to perform. Gene fragments are generated by digesting the source DNA molecules with DNase. The size of the fragments can be selected to gain some control over the frequency of crossover between source sequences. Other methods for fragment generation, such as the use of Endonuclease V or Uracil DNA glycosylase treatment of source DNA with incorporated dUTP [82, 83] or multiplex PCR [84], have also been reported. The mixture of fragments is then subjected to repeated cycles of melting, annealing, and extension. The quantity of full-length reconstructed sequence produced is very small so the production of a reasonable quantity of full-length DNA requires PCR amplification. As the final PCR amplification is performed on a sample that originally contained only gene fragments, a successful amplification generally indicates a successful reconstruction. This makes it easy to tell whether the conditions are correct for reconstruction. However, it does not confirm that conditions are optimal for recombination.

StEP [11, 74] also relies on repeated cycles of melting, annealing, and extension to build up the full-length gene. However, in StEP fragments are added in steps to the end of a growing strand. The growing strand is prevented from reaching its full length by keeping the extension time very short. This results in only partial elongation of a strand in any one extension step. The strand is then melted from its template and may anneal in the next step to a different template, leading to a crossover. StEP can be harder for an inexperienced user to set up than DNA shuffling as full-length templates are included in the StEP reaction. This means that the production of full-length product may indicate simple amplification rather than recombination. Balancing the need for yield and recombination can be challenging, as it is not always straightforward to determine whether recombination has occurred. Careful selection, or preparation, of templates to include convenient restriction endonuclease sites will aid optimisation by making it easier to quantify the degree of recombination. Once optimised for a specific thermal cycler, primers, and template, StEP can be easier to perform than DNA shuffling as fewer steps are involved (for examples see [74, 85, 86]). A StEP protocol is available at Nature Protocols [87].
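The template-switching idea behind StEP can be illustrated with a toy in silico model. This is a sketch under strong simplifying assumptions, not a simulation of the real reaction: parents are taken to be pre-aligned and of equal length, the growing strand picks its next template uniformly at random regardless of sequence similarity, and each extension adds a random number of bases up to a hypothetical `max_extension`.

```python
import random


def step_chimera(parents, max_extension, rng):
    """Build one chimera by repeated short extensions on randomly chosen templates."""
    length = len(parents[0])
    if any(len(p) != length for p in parents):
        raise ValueError("parents must be aligned and of equal length")
    pieces, path, pos = [], [], 0
    while pos < length:
        template = rng.randrange(len(parents))  # anneal to a randomly chosen template
        step = rng.randint(1, max_extension)    # short, incomplete extension
        pieces.append(parents[template][pos:pos + step])
        path.append(template)
        pos += step
    return "".join(pieces), path


def count_crossovers(path):
    """Number of template switches along the list of templates used."""
    return sum(1 for a, b in zip(path, path[1:]) if a != b)


if __name__ == "__main__":
    rng = random.Random(1)
    parents = ["A" * 60, "C" * 60]  # artificial parents so crossovers are visible
    chimera, path = step_chimera(parents, 10, rng)
    print(chimera)
    print("crossovers:", count_crossovers(path))
```

In this model shorter extensions give more opportunities to switch template, which mirrors the role of the very short extension time in the real protocol. Because the model ignores sequence similarity, it overstates how freely crossovers occur.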

Random chimeragenesis on transient templates (RACHITT [75, 76]) is a technique that is conceptually similar to StEP and DNA shuffling but is designed to produce chimeras with a much larger number of crossovers. In this case the fragments are generated from one strand of all but one of the parental DNAs. These fragments are then reassembled on the full-length opposite strand of the remaining parent (the transient template). The fragments are cut back to remove mismatched sections, extended and then ligated to generate full-length genes. Finally, the template strand is destroyed to leave only the ligated gene fragments to be converted to double-stranded DNA. The advantage of this assembly process is that it creates a greater number of crossovers. The disadvantage lies in the additional steps required: the generation of single strands, the removal of ‘flaps’ of non-annealing DNA, and the removal of the template strand. Care is required in each of these steps as incomplete removal and carryover can contaminate the library with parental sequence. Nonetheless, RACHITT is the method of choice if a large number of crossovers at random positions is required.

The major difficulty with both DNA shuffling and StEP is that they rely on the annealing of a growing DNA strand to a template. This annealing is most likely to occur to a template sequence that is very similar to the 3’ end of the growing strand. Sequences can therefore only be recombined when they are similar enough to allow annealing, and crossovers will occur preferentially where the template sequences are most similar. It is common for a new user to sequence a number of products from a DNA shuffling reaction only to find that the full-length sequences are identical to the templates. RACHITT, although it does not rely on priming, is still limited to the incorporation of sequence elements that are similar to the template. There is now a significant quantity of literature available on computational methods to predict where crossovers will occur, optimisation of sequences to increase the number of crossovers, and prediction of optimal conditions for the recombination reaction [28, 88, 89, 90]. These computational methods can provide a good guide to whether given recombination experiments will work and what the optimal conditions are likely to be.
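A very crude first look at where crossovers are likely can be obtained by scanning two aligned parental sequences for windows of high identity. The sketch below is not one of the published prediction methods cited above; the function names, the window size and the threshold are assumptions for illustration, and the sequences are assumed to be pre-aligned without gaps.

```python
def window_identity(seq_a, seq_b, window):
    """Fraction of identical positions in each sliding window of two aligned sequences."""
    if len(seq_a) != len(seq_b):
        raise ValueError("sequences must be aligned and of equal length")
    matches = [a == b for a, b in zip(seq_a, seq_b)]
    return [
        sum(matches[i:i + window]) / window
        for i in range(len(matches) - window + 1)
    ]


def candidate_crossover_windows(seq_a, seq_b, window, threshold=1.0):
    """Start positions of windows whose identity meets the threshold."""
    identities = window_identity(seq_a, seq_b, window)
    return [i for i, ident in enumerate(identities) if ident >= threshold]


if __name__ == "__main__":
    # Short made-up sequences, used only to show the calculation
    parent_a = "ATGGCTAAAGGT"
    parent_b = "ATGGCAAAGGGT"
    print(window_identity(parent_a, parent_b, 4))
    print(candidate_crossover_windows(parent_a, parent_b, 4))
```

Windows that pass the threshold mark stretches where a growing strand could anneal equally well to either parent. Real reactions depend on annealing temperature and fragment length as well, which this sketch ignores.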

There is also a wide range of experimental techniques that can improve the chance of recombining sequences with limited homology or increase the distribution of crossover events. Recombination can be forced to occur by eliminating a segment of each template gene from the recombination reaction. For the full length gene to be reconstructed it must combine portions from at least two templates. Restriction digest of each template can be used to force recombination in this manner [91]. An alternative strategy is to use single-stranded DNA templates to prevent the formation of homoduplexes [92, 93]. However the crossover events are still usually restricted to the region of highest homology generating libraries with limited diversity. A complementary approach to increasing the number and distribution of crossover events is therefore to increase the homology of the template genes. This can be achieved by optimising the template gene sequences directly, either by mutagenesis, or by complete gene synthesis. The nucleotide homology of two genes can often be significantly increased without changing the encoded protein sequence, particularly if the template genes are from species with different codon preferences [89]. Again, it is very valuable to design or modify source sequences to contain restriction endonuclease sites that will allow a rapid analysis of the degree of recombination that has occurred.
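The idea of raising nucleotide homology without altering the encoded protein can be sketched as follows. For each codon of a target gene, the code picks the synonymous codon that shares the most bases with the corresponding codon of a reference gene. This is a minimal sketch assuming the standard genetic code and two in-frame, gap-free, equal-length coding sequences; it ignores codon usage in the expression host, and the function names are illustrative.

```python
from itertools import product

BASES = "TCAG"
# Standard genetic code with codons ordered TTT, TTC, TTA, TTG, TCT, ... GGG
AMINO_ACIDS = "FFLLSSSSYY**CC*WLLLLPPPPHHQQRRRRIIIMTTTTNNKKSSRRVVVVAAAADDEEGGGG"
CODON_TABLE = {
    "".join(codon): aa
    for codon, aa in zip(product(BASES, repeat=3), AMINO_ACIDS)
}


def translate(seq):
    """Translate an in-frame coding sequence into one-letter amino acid codes."""
    return "".join(CODON_TABLE[seq[i:i + 3]] for i in range(0, len(seq), 3))


def identity(seq_a, seq_b):
    """Fraction of identical positions between two equal-length sequences."""
    return sum(x == y for x, y in zip(seq_a, seq_b)) / len(seq_a)


def harmonise(reference, target):
    """Recode `target` with synonymous codons that best match `reference`."""
    if len(reference) != len(target) or len(target) % 3:
        raise ValueError("sequences must be in frame and of equal length")
    recoded = []
    for i in range(0, len(target), 3):
        ref_codon, tgt_codon = reference[i:i + 3], target[i:i + 3]
        synonyms = [c for c, aa in CODON_TABLE.items() if aa == CODON_TABLE[tgt_codon]]
        # Prefer the most matching bases; on ties keep the original codon
        best = max(
            synonyms,
            key=lambda c: (sum(x == y for x, y in zip(c, ref_codon)), c == tgt_codon),
        )
        recoded.append(best)
    return "".join(recoded)


if __name__ == "__main__":
    # Made-up sequences encoding the same short peptide with different codons
    reference = "ATGGCTAAAGGT"
    target = "ATGGCAAAGGGA"
    recoded = harmonise(reference, target)
    print(identity(reference, target), identity(reference, recoded))
    print(translate(target) == translate(recoded))
```

The recoded sequence would in practice be obtained by gene synthesis or mutagenesis, as described above.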

Recombination can also be increased by including synthetic oligonucleotides that combine sequence elements from two different templates, either in a separate amplification step [94] or in the shuffling reaction itself. This is, in a sense, a method of directing crossover events that bears the same relation to the generation of random crossover events as oligonucleotide-directed mutagenesis does to random mutagenesis. Thus each oligonucleotide will direct one specific crossover event and each desired crossover requires an oligonucleotide. This strategy is effective in combination with DNA shuffling in generating a much broader selection of crossover events. The methodology is taken to its logical extreme with the Synthetic Shuffling method described by Ness et al. [70] and the Assembly of Designed Oligonucleotides (ADO) method described by Reetz and co-workers [69]. In both approaches synthetic oligonucleotides are designed based on template sequences to generate recombined full-length genes entirely from synthetic DNA. The advantage of using entirely synthetic oligonucleotides is that absolute control over the synthesis procedure gives absolute control over the template sequence and the position of crossovers, and also allows the introduction of specific point mutations.

Recombination without homology

The difficulty of generating recombination events where there is little sequence homology between template genes has led to the development of a number of techniques that do not require strand extension or annealing to a template. These methods are not appropriate for the recombination of point mutations, the most common use of DNA shuffling, but are useful where it is desirable to generate hybrids of genes that share little DNA sequence similarity. For instance, Ostermeier et al. created hybrids of human and bacterial glycinamide ribonucleotide transformylase enzymes [77]. These enzymes have functional similarities and it was therefore of interest to examine hybrid enzymes. However, the genes share only 50% sequence homology.

The first description of a general technique for recombining non-homologous sequences was by Benkovic and coworkers. The method, termed incremental truncation for the creation of hybrid enzymes (ITCHY), is based on the direct ligation of libraries of fragments generated by the truncation of two template sequences, with each template being truncated from the opposite end. Fragments of one template that have been digested from the 5'-end of the gene are ligated to fragments of the second template that have been digested from the 3'-end. This ligation process removes any need for homology at the point of crossover, but the result of this is usually that the connection is made at random. Thus the DNA fragments may not be connected in a way that is at all analogous to their position in the template gene and may be ligated out of frame, generating a nonsense product. The potential for generating out-of-frame products restricts the number of crossover events to one or perhaps two.
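The scale of the out-of-frame problem can be estimated by brute-force enumeration. The sketch below assumes that every truncation length is equally likely and that both genes start in frame at their first base; a fusion of the first i bases of gene A to gene B from base j onwards keeps the reading frame of B only when i and j leave the same remainder on division by three. The function name is illustrative.

```python
def in_frame_fraction(len_a, len_b):
    """Fraction of A[:i] + B[j:] fusions that keep gene B in its original frame."""
    total = 0
    in_frame = 0
    for i in range(len_a + 1):      # bases retained from the 5' end of gene A
        for j in range(len_b + 1):  # position of the first retained base of gene B
            total += 1
            if i % 3 == j % 3:
                in_frame += 1
    return in_frame / total


if __name__ == "__main__":
    print(round(in_frame_fraction(300, 300), 3))
```

Under these assumptions roughly one fusion in three is in frame at a single crossover, and each additional random crossover multiplies that fraction again, which is consistent with the practical limit of one or two crossovers noted above.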

In the initial version of ITCHY this incremental truncation was performed via timed exonuclease digestions. This proved difficult to control and optimise, so an improved procedure was developed in which the initial templates are generated with phosphorothioate linkages incorporated at random along the length of the gene [77]. Complete exonuclease digestion then generates fragments with lengths determined by the position of the nuclease-resistant phosphorothioate linkage. This method, named thio-ITCHY, is much more straightforward to perform. Two variants are described, differing mainly in the way in which the phosphorothioates are incorporated, but for most users PCR-based incorporation is probably the most straightforward. Plasmid constructs to facilitate the use of these methods and for removing out-of-frame ligation products are also described [77, 95]. Libraries generated using ITCHY can be used as templates for further recombination by DNA shuffling to generate hybrids with more than one crossover [79, 96, 97].

An alternative approach, described by Bittker et al. [98], simplifies the fragment preparation process and also allows for the generation of multiple random crossover points. The approach involves the direct ligation of 5'-phosphorylated blunt-ended fragments by DNA ligase. To make this approach practical it was necessary to ensure that intermolecular ligation was favoured, which was achieved by the addition of polyethylene glycol. In addition, a hairpin DNA is added to the ligation reaction to act both as a stopper, providing control over the length of recombinants formed, and as a PCR primer binding site for amplification of the recombinant DNA. This method again provides completely random recombination.

A number of papers have described general methods for the defined recombination of parental sequences. These methods generate crossovers at specific positions and do not rely on any sequence homology between the parents. O’Maille et al. [99] designed primers for the amplification of specific gene fragments from each parent that could then be reassembled by an overlap extension approach. The overlaps here were designed manually based on the structural and sequence homology of the parents. Hiraga and Arnold [100] have developed a method based on the insertion of tag sequences containing type II restriction enzyme sites into the parent genes. The tag sequences are designed to provide for the easy generation of specific fragments by the use of a single restriction enzyme and the correct reassembly of fragments via the different overhangs yielded by the type II restriction enzyme. The issue with such directed methods is the choice of where crossovers between parental sequences should be placed. Arnold and co-workers have reported and validated a general approach to identifying optimal crossover sites based on a computational analysis of the structures of the parental proteins to identify regions with minimal interactions with the rest of the protein [101, 102] whereas O’Maille et al. design the crossover points manually. In both cases structural information is important and in many cases this will either be available or can be inferred from structure and sequence alignments.

Summary

A range of different techniques is available for recombining diverse sequences. DNA shuffling remains the most popular technique. It is an effective way of recombining sequences with high homology and is easy to set up and perform. A whole toolkit of techniques has grown up around DNA shuffling with methods to increase recombination between less related sequences and optimise sequences and conditions for optimal recombination. StEP is a broadly similar technique to DNA shuffling although the implementation is very different. Both DNA shuffling and StEP suffer from one major problem; recombination is limited to parental genes with very similar sequences and crossover events are strongly biased towards regions of highest sequence similarity. A particular problem when attempting to recombine a number of point mutants of an original sequence is that the majority of recombination products will be either the original wildtype sequence or the unrecombined point mutants [103]. Techniques to overcome this problem generally rely on the inclusion of synthetic oligonucleotides in the shuffling reaction to encourage specific crossover events [94] or the exclusion of specific regions of template genes from the final product [91]. The use of synthetic oligonucleotides reaches its logical conclusion in methods where recombination is performed between entirely synthetic sequences allowing optimisation of the template DNA sequence, crossover points, and the addition of further point randomisation if desired [69, 70]. Benkovic and co-workers have described a range of related techniques that allow recombination between two unrelated template sequences. These methods rely on truncation of the two templates from opposite ends followed by religation of the remaining fragments together. The method is effective but is limited to products with a single recombination event between two template sequences. 
Recombination at any set of specific positions can be performed by primer-based methods such as strand extension or by the incorporation of specific restriction enzyme sites.

Overall, DNA shuffling looks set to remain the most popular method for recombination. The combination of error-prone PCR followed by shuffling of selected mutants with improved function is the most commonly followed strategy for directed evolution experiments. DNA shuffling has drawbacks and creates biased libraries but equally has produced good results. The occasional user will find DNA shuffling the most straightforward method to use. Again, specific experiments that require less bias or more efficient libraries and those that require statistical analysis may require either improved or modified techniques. General users will require experimental demonstration that the more complex techniques are required for their specific application.

Design considerations and computational tools

As directed evolution has matured over the past ten years, a body of knowledge has been built up that is beginning to provide a clear view of the most efficient and effective ways of planning library design. There has been a gradual move away from what is now the conventional approach of several rounds of error-prone PCR and selection followed by DNA shuffling to more directed approaches, which have borne fruit in the selection of more challenging targets (see recent papers by Reetz and by Arnold on directed shuffling). However, the detailed analysis of successes and failures has been limited by a lack of thorough characterisation of libraries and the limited availability of tools to predict the content of libraries.

A range of computational tools is now available to aid in the design and analysis of directed evolution experiments. Some of these are available as web services, particularly those developed by Firth and Patrick [104, 105, 106], which include tools for analysing epPCR, recombination, and other methods. The Library Diversity Programme developed by Volles and Lansbury [31] focuses on the analysis of random mutation by epPCR, chemical methods, or oligonucleotide incorporation and is also available on the web.

It remains to be shown in a general way how best to use these programs in library design. However, they are very useful in defining target library sizes and diversities. By balancing the desired diversity against the cost of screening it is possible to optimise the library design parameters.
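The balance between diversity and screening effort can be illustrated with the standard Poisson approximation for sampling a library: if a library contains V equally likely variants and N clones are screened, the expected fraction of variants seen at least once is approximately 1 - exp(-N/V). The sketch below is not a reimplementation of any of the programs cited above; the function names are illustrative and the assumption of equal variant frequencies is optimistic for real, biased libraries.

```python
import math


def expected_coverage(n_clones, n_variants):
    """Expected fraction of equally likely variants sampled at least once."""
    return 1.0 - math.exp(-n_clones / n_variants)


def clones_needed(n_variants, coverage):
    """Clones to screen so that the expected coverage reaches the requested fraction."""
    if not 0.0 < coverage < 1.0:
        raise ValueError("coverage must be between 0 and 1 (exclusive)")
    return math.ceil(-n_variants * math.log(1.0 - coverage))


if __name__ == "__main__":
    # Example: an unbiased library of 8000 variants (three fully randomised codons)
    for target in (0.63, 0.90, 0.95, 0.99):
        print(target, clones_needed(8000, target))
```

Under this approximation, screening as many clones as there are variants covers only about 63% of the library, and high coverage requires oversampling several-fold. Any codon or amplification bias increases the oversampling required.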

Patent and licensing issues

The targets of most directed evolution experiments are usually technologically based and often have some commercial value. It is therefore worth noting which randomization methods are protected by patent. The underlying use of PCR in the majority of these methods is covered by the original patents for the polymerase chain reaction held by Hoffmann-La Roche. It is, however, worth noting that some commercially available thermostable polymerases, such as Vent from New England Biolabs, are apparently not licensed for PCR. The use of Mn2+, biased nucleotides and dNTP analogues to increase the error rate of PCR amplification is not protected. The DNA polymerase on which the Stratagene GeneMorph mutagenesis system is based is apparently not patented for the purpose of mutagenesis. According to the instruction manual, the standard license agreement for use of the GeneMorph system is not limited. Both DNA shuffling and StEP have been protected. Patents for DNA shuffling (US5605793, US5830721 and US6506603), including synthetic shuffling (US6521453), are held by Affymax Technologies and Maxygen, while those for StEP (US6153410 and US6177263) are held by the California Institute of Technology. As these techniques form such a crucial part of virtually all directed evolution experiments, it may be useful to consider licensing issues when deciding on which method to use where any commercial outcome is expected. The various ITCHY methods have not been patented.

Of the more recently described and more complex methodologies, the RID method for the generation of random insertions and deletions and the ADO method for recombination have not been patented. The MAX method, useful in the generation of unbiased libraries containing multiple randomized positions, has been protected (WO00/15777, held by Aston University and Amersham Pharmacia Biotech, and WO 03/106679, held by Aston University). The use of trinucleotide (and dinucleotide) phosphoramidites in the synthesis of mutagenic primers is covered by patents held by Maxygen (US6436675). Diversa also claims a patent on the use of a complete set of primers to mutagenize every position in a gene (US6562594).

Conclusion

The vast majority of reported directed evolution experiments use a combination of error-prone PCR and DNA shuffling, sometimes combined with primer-based saturation mutagenesis, to construct the initial library and subsequent libraries for each cycle of selection. These techniques are straightforward and have been successfully applied to the optimization of a range of protein activities including binding, stability and enzyme selectivity. The challenge now lies in pushing back the boundaries of what can be achieved using directed evolution. Tackling these challenges may require the construction of new types of libraries and more efficiently constructed libraries of types already available. A large toolkit of methods has recently become available that makes possible the construction of these novel and highly efficient libraries. These methods are necessarily more complex than error-prone PCR, DNA shuffling and oligonucleotide-based mutagenesis, and are therefore unlikely to be the first choice of the general user. However, they are likely to come into their own where the easy methods have failed. They will also be valuable for those groups working to develop optimized and rational approaches to directed evolution. It is not, as yet, clear which of these new techniques will be most valuable or most popular. However, the ability to generate and combine such a wide range of sequence diversity is an important staging post on the route to developing the full promise of the technology of directed evolution.

References

- Farinas ET, Bulter T, and Arnold FH. Directed enzyme evolution. Curr Opin Biotechnol. 2001 Dec;12(6):545-51. DOI:10.1016/s0958-1669(01)00261-0 |

Reetz MT, Rentzsch M, Pletsch A, Maywald M, Towards the Directed Evolution of Hybrid Catalysts, Chimia 2002, 56, 721-723

- Taylor SV, Kast P, and Hilvert D. Investigating and Engineering Enzymes by Genetic Selection. Angew Chem Int Ed Engl. 2001 Sep 17;40(18):3310-3335. DOI:10.1002/1521-3773(20010917)40:18<3310::aid-anie3310>3.0.co;2-p |

- Waldo GS. Genetic screens and directed evolution for protein solubility. Curr Opin Chem Biol. 2003 Feb;7(1):33-8. DOI:10.1016/s1367-5931(02)00017-0 |

- Tao H and Cornish VW. Milestones in directed enzyme evolution. Curr Opin Chem Biol. 2002 Dec;6(6):858-64. DOI:10.1016/s1367-5931(02)00396-4 |

- Lin H and Cornish VW. Screening and selection methods for large-scale analysis of protein function. Angew Chem Int Ed Engl. 2002 Dec 2;41(23):4402-25. DOI:10.1002/1521-3773(20021202)41:23<4402::AID-ANIE4402>3.0.CO;2-H |

- ISBN:158829286X

- ISBN:1588292851

- Stemmer WP. DNA shuffling by random fragmentation and reassembly: in vitro recombination for molecular evolution. Proc Natl Acad Sci U S A. 1994 Oct 25;91(22):10747-51. DOI:10.1073/pnas.91.22.10747

- Stemmer WP. Rapid evolution of a protein in vitro by DNA shuffling. Nature. 1994 Aug 4;370(6488):389-91. DOI:10.1038/370389a0

- Zhao H, Giver L, Shao Z, Affholter JA, and Arnold FH. Molecular evolution by staggered extension process (StEP) in vitro recombination. Nat Biotechnol. 1998 Mar;16(3):258-61. DOI:10.1038/nbt0398-258

- Ostermeier M, Shim JH, and Benkovic SJ. A combinatorial approach to hybrid enzymes independent of DNA homology. Nat Biotechnol. 1999 Dec;17(12):1205-9. DOI:10.1038/70754

- Bornscheuer UT, Altenbuchner J, and Meyer HH. Directed evolution of an esterase: screening of enzyme libraries based on pH-indicators and a growth assay. Bioorg Med Chem. 1999 Oct;7(10):2169-73. DOI:10.1016/s0968-0896(99)00147-9

- Alexeeva M, Enright A, Dawson MJ, Mahmoudian M, and Turner NJ. Deracemization of alpha-methylbenzylamine using an enzyme obtained by in vitro evolution. Angew Chem Int Ed Engl. 2002 Sep 2;41(17):3177-80. DOI:10.1002/1521-3773(20020902)41:17<3177::AID-ANIE3177>3.0.CO;2-P

- Nguyen AW and Daugherty PS. Production of randomly mutated plasmid libraries using mutator strains. Methods Mol Biol. 2003;231:39-44. DOI:10.1385/1-59259-395-X:39

- Cadwell RC and Joyce GF. Mutagenic PCR. PCR Methods Appl. 1994 Jun;3(6):S136-40. DOI:10.1101/gr.3.6.s136

- Cirino PC, Mayer KM, and Umeno D. Generating mutant libraries using error-prone PCR. Methods Mol Biol. 2003;231:3-9. DOI:10.1385/1-59259-395-X:3

- Matsumura I and Ellington AD. In vitro evolution of beta-glucuronidase into a beta-galactosidase proceeds through non-specific intermediates. J Mol Biol. 2001 Jan 12;305(2):331-9. DOI:10.1006/jmbi.2000.4259

- Lingen B, Grötzinger J, Kolter D, Kula MR, and Pohl M. Improving the carboligase activity of benzoylformate decarboxylase from Pseudomonas putida by a combination of directed evolution and site-directed mutagenesis. Protein Eng. 2002 Jul;15(7):585-93. DOI:10.1093/protein/15.7.585

- Bessler C, Schmitt J, Maurer KH, and Schmid RD. Directed evolution of a bacterial alpha-amylase: toward enhanced pH-performance and higher specific activity. Protein Sci. 2003 Oct;12(10):2141-9. DOI:10.1110/ps.0384403

- Zaccolo M, Williams DM, Brown DM, and Gherardi E. An approach to random mutagenesis of DNA using mixtures of triphosphate derivatives of nucleoside analogues. J Mol Biol. 1996 Feb 2;255(4):589-603. DOI:10.1006/jmbi.1996.0049

- Zaccolo M and Gherardi E. The effect of high-frequency random mutagenesis on in vitro protein evolution: a study on TEM-1 beta-lactamase. J Mol Biol. 1999 Jan 15;285(2):775-83. DOI:10.1006/jmbi.1998.2262

- Kobayashi A, Kitaoka M, and Hayashi K. Novel PCR-mediated mutagenesis employing DNA containing a natural abasic site as a template and translesional Taq DNA polymerase. J Biotechnol. 2005 Mar 30;116(3):227-32. DOI:10.1016/j.jbiotec.2004.10.016

- Kopsidas G, Carman RK, Stutt EL, Raicevic A, Roberts AS, Siomos MA, Dobric N, Pontes-Braz L, and Coia G. RNA mutagenesis yields highly diverse mRNA libraries for in vitro protein evolution. BMC Biotechnol. 2007 Apr 11;7:18. DOI:10.1186/1472-6750-7-18

- Fujii R, Kitaoka M, and Hayashi K. One-step random mutagenesis by error-prone rolling circle amplification. Nucleic Acids Res. 2004 Oct 26;32(19):e145. DOI:10.1093/nar/gnh147

- Fujii R, Kitaoka M, and Hayashi K. Error-prone rolling circle amplification: the simplest random mutagenesis protocol. Nat Protoc. 2006;1(5):2493-7. DOI:10.1038/nprot.2006.403

- Matsumura I and Rowe LA. Whole plasmid mutagenic PCR for directed protein evolution. Biomol Eng. 2005 Jun;22(1-3):73-9. DOI:10.1016/j.bioeng.2004.10.004

- Patrick WM, Firth AE, and Blackburn JM. User-friendly algorithms for estimating completeness and diversity in randomized protein-encoding libraries. Protein Eng. 2003 Jun;16(6):451-7. DOI:10.1093/protein/gzg057

- Rowe LA, Geddie ML, Alexander OB, and Matsumura I. A comparison of directed evolution approaches using the beta-glucuronidase model system. J Mol Biol. 2003 Sep 26;332(4):851-60. DOI:10.1016/s0022-2836(03)00972-0

- Vanhercke T, Ampe C, Tirry L, and Denolf P. Reducing mutational bias in random protein libraries. Anal Biochem. 2005 Apr 1;339(1):9-14. DOI:10.1016/j.ab.2004.11.032

- Volles MJ and Lansbury PT Jr. A computer program for the estimation of protein and nucleic acid sequence diversity in random point mutagenesis libraries. Nucleic Acids Res. 2005;33(11):3667-77. DOI:10.1093/nar/gki669

- Miyazaki K and Arnold FH. Exploring nonnatural evolutionary pathways by saturation mutagenesis: rapid improvement of protein function. J Mol Evol. 1999 Dec;49(6):716-20. DOI:10.1007/pl00006593

- Shortle D and Sondek J. The emerging role of insertions and deletions in protein engineering. Curr Opin Biotechnol. 1995 Aug;6(4):387-93. DOI:10.1016/0958-1669(95)80067-0

- Osuna J, Yáñez J, Soberón X, and Gaytán P. Protein evolution by codon-based random deletions. Nucleic Acids Res. 2004 Sep 30;32(17):e136. DOI:10.1093/nar/gnh135

- Murakami H, Hohsaka T, and Sisido M. Random insertion and deletion of arbitrary number of bases for codon-based random mutation of DNAs. Nat Biotechnol. 2002 Jan;20(1):76-81. DOI:10.1038/nbt0102-76

- Murakami H, Hohsaka T, and Sisido M. Random insertion and deletion mutagenesis. Methods Mol Biol. 2003;231:53-64. DOI:10.1385/1-59259-395-X:53

- Hayes F and Hallet B. Pentapeptide scanning mutagenesis: encouraging old proteins to execute unusual tricks. Trends Microbiol. 2000 Dec;8(12):571-7. DOI:10.1016/s0966-842x(00)01857-6

- Pikkemaat MG and Janssen DB. Generating segmental mutations in haloalkane dehalogenase: a novel part in the directed evolution toolbox. Nucleic Acids Res. 2002 Apr 15;30(8):E35-5. DOI:10.1093/nar/30.8.e35

- Jones DD. Triplet nucleotide removal at random positions in a target gene: the tolerance of TEM-1 beta-lactamase to an amino acid deletion. Nucleic Acids Res. 2005 May 16;33(9):e80. DOI:10.1093/nar/gni077

- Simm AM, Baldwin AJ, Busse K, and Jones DD. Investigating protein structural plasticity by surveying the consequence of an amino acid deletion from TEM-1 beta-lactamase. FEBS Lett. 2007 Aug 21;581(21):3904-8. DOI:10.1016/j.febslet.2007.07.018

- Ward B and Juehne T. Combinatorial library diversity: probability assessment of library populations. Nucleic Acids Res. 1998 Feb 15;26(4):879-86. DOI:10.1093/nar/26.4.879

- Palfrey D, Picardo M, and Hine AV. A new randomization assay reveals unexpected elements of sequence bias in model 'randomized' gene libraries: implications for biopanning. Gene. 2000 Jun 13;251(1):91-9. DOI:10.1016/s0378-1119(00)00206-7

- Virnekäs B, Ge L, Plückthun A, Schneider KC, Wellnhofer G, and Moroney SE. Trinucleotide phosphoramidites: ideal reagents for the synthesis of mixed oligonucleotides for random mutagenesis. Nucleic Acids Res. 1994 Dec 25;22(25):5600-7. DOI:10.1093/nar/22.25.5600

- Zehl A, Starke A, Cech D, Hartsch T, Merkl R, and Fritz HJ. Efficient and flexible access to fully protected trinucleotides suitable for DNA synthesis by automated phosphoramidite chemistry. Chem Comm. 1996;23:2677-2678.

- Kayushin AL, Korosteleva MD, Miroshnikov AI, Kosch W, Zubov D, and Piel N. A convenient approach to the synthesis of trinucleotide phosphoramidites--synthons for the generation of oligonucleotide/peptide libraries. Nucleic Acids Res. 1996 Oct 1;24(19):3748-55. DOI:10.1093/nar/24.19.3748

- Kayushin A, Korosteleva M, and Miroshnikov A. Large-scale solid-phase preparation of 3'-unprotected trinucleotide phosphotriesters--precursors for synthesis of trinucleotide phosphoramidites. Nucleosides Nucleotides Nucleic Acids. 2000 Oct-Dec;19(10-12):1967-76. DOI:10.1080/15257770008045471

- Gaytán P, Yáñez J, Sánchez F, and Soberón X. Orthogonal combinatorial mutagenesis: a codon-level combinatorial mutagenesis method useful for low multiplicity and amino acid-scanning protocols. Nucleic Acids Res. 2001 Feb 1;29(3):E9. DOI:10.1093/nar/29.3.e9

- Gaytán P, Yañez J, Sánchez F, Mackie H, and Soberón X. Combination of DMT-mononucleotide and Fmoc-trinucleotide phosphoramidites in oligonucleotide synthesis affords an automatable codon-level mutagenesis method. Chem Biol. 1998 Sep;5(9):519-27. DOI:10.1016/s1074-5521(98)90007-2

- Gaytán P, Osuna J, and Soberón X. Novel ceftazidime-resistance beta-lactamases generated by a codon-based mutagenesis method and selection. Nucleic Acids Res. 2002 Aug 15;30(16):e84. DOI:10.1093/nar/gnf083

- Lahr SJ, Broadwater A, Carter CW Jr, Collier ML, Hensley L, Waldner JC, Pielak GJ, and Edgell MH. Patterned library analysis: a method for the quantitative assessment of hypotheses concerning the determinants of protein structure. Proc Natl Acad Sci U S A. 1999 Dec 21;96(26):14860-5. DOI:10.1073/pnas.96.26.14860

- Glaser SM, Yelton DE, and Huse WD. Antibody engineering by codon-based mutagenesis in a filamentous phage vector system. J Immunol. 1992 Dec 15;149(12):3903-13.

- Liebeton K, Zonta A, Schimossek K, Nardini M, Lang D, Dijkstra BW, Reetz MT, and Jaeger KE. Directed evolution of an enantioselective lipase. Chem Biol. 2000 Sep;7(9):709-18. DOI:10.1016/s1074-5521(00)00015-6

- Sakamoto T, Joern JM, Arisawa A, and Arnold FH. Laboratory evolution of toluene dioxygenase to accept 4-picoline as a substrate. Appl Environ Microbiol. 2001 Sep;67(9):3882-7. DOI:10.1128/AEM.67.9.3882-3887.2001

- Horsman GP, Liu AM, Henke E, Bornscheuer UT, and Kazlauskas RJ. Mutations in distant residues moderately increase the enantioselectivity of Pseudomonas fluorescens esterase towards methyl 3-bromo-2-methylpropanoate and ethyl 3-phenylbutyrate. Chemistry. 2003 May 9;9(9):1933-9. DOI:10.1002/chem.200204551

- Leemhuis H, Rozeboom HJ, Wilbrink M, Euverink GJ, Dijkstra BW, and Dijkhuizen L. Conversion of cyclodextrin glycosyltransferase into a starch hydrolase by directed evolution: the role of alanine 230 in acceptor subsite +1. Biochemistry. 2003 Jun 24;42(24):7518-26. DOI:10.1021/bi034439q

- Wada M, Hsu CC, Franke D, Mitchell M, Heine A, Wilson I, and Wong CH. Directed evolution of N-acetylneuraminic acid aldolase to catalyze enantiomeric aldol reactions. Bioorg Med Chem. 2003 May 1;11(9):2091-8. DOI:10.1016/s0968-0896(03)00052-x

- Juillerat A, Gronemeyer T, Keppler A, Gendreizig S, Pick H, Vogel H, and Johnsson K. Directed evolution of O6-alkylguanine-DNA alkyltransferase for efficient labeling of fusion proteins with small molecules in vivo. Chem Biol. 2003 Apr;10(4):313-7. DOI:10.1016/s1074-5521(03)00068-1

- Sio CF, Riemens AM, van der Laan JM, Verhaert RM, and Quax WJ. Directed evolution of a glutaryl acylase into an adipyl acylase. Eur J Biochem. 2002 Sep;269(18):4495-504. DOI:10.1046/j.1432-1033.2002.03143.x

- Georgescu R, Bandara G, and Sun L. Saturation mutagenesis. Methods Mol Biol. 2003;231:75-83. DOI:10.1385/1-59259-395-X:75

- Tyagi R, Lai R, and Duggleby RG. A new approach to 'megaprimer' polymerase chain reaction mutagenesis without an intermediate gel purification step. BMC Biotechnol. 2004 Feb 26;4:2. DOI:10.1186/1472-6750-4-2

- Miyazaki K and Takenouchi M. Creating random mutagenesis libraries using megaprimer PCR of whole plasmid. Biotechniques. 2002 Nov;33(5):1033-4, 1036-8. DOI:10.2144/02335st03

- Miyazaki K. Creating random mutagenesis libraries by megaprimer PCR of whole plasmid (MEGAWHOP). Methods Mol Biol. 2003;231:23-8. DOI:10.1385/1-59259-395-X:23

- Zheng L, Baumann U, and Reymond JL. An efficient one-step site-directed and site-saturation mutagenesis protocol. Nucleic Acids Res. 2004 Aug 10;32(14):e115. DOI:10.1093/nar/gnh110

- Wu W, Jia Z, Liu P, Xie Z, and Wei Q. A novel PCR strategy for high-efficiency, automated site-directed mutagenesis. Nucleic Acids Res. 2005 Jul 19;33(13):e110. DOI:10.1093/nar/gni115

- Ko JK and Ma J. A rapid and efficient PCR-based mutagenesis method applicable to cell physiology study. Am J Physiol Cell Physiol. 2005 Jun;288(6):C1273-8. DOI:10.1152/ajpcell.00517.2004

- Seyfang A and Jin JH. Multiple site-directed mutagenesis of more than 10 sites simultaneously and in a single round. Anal Biochem. 2004 Jan 15;324(2):285-91. DOI:10.1016/j.ab.2003.10.012

- Liu L and Lomonossoff G. A site-directed mutagenesis method utilising large double-stranded DNA templates for the simultaneous introduction of multiple changes and sequential multiple rounds of mutation: Application to the study of whole viral genomes. J Virol Methods. 2006 Oct;137(1):63-71. DOI:10.1016/j.jviromet.2006.05.034

- Zha D, Eipper A, and Reetz MT. Assembly of designed oligonucleotides as an efficient method for gene recombination: a new tool in directed evolution. Chembiochem. 2003 Jan 3;4(1):34-9. DOI:10.1002/cbic.200390011

- Ness JE, Kim S, Gottman A, Pak R, Krebber A, Borchert TV, Govindarajan S, Mundorff EC, and Minshull J. Synthetic shuffling expands functional protein diversity by allowing amino acids to recombine independently. Nat Biotechnol. 2002 Dec;20(12):1251-5. DOI:10.1038/nbt754

- Hogrefe HH, Cline J, Youngblood GL, and Allen RM. Creating randomized amino acid libraries with the QuikChange Multi Site-Directed Mutagenesis Kit. Biotechniques. 2002 Nov;33(5):1158-60, 1162, 1164-5. DOI:10.2144/02335pf01

- Hughes MD, Nagel DA, Santos AF, Sutherland AJ, and Hine AV. Removing the redundancy from randomised gene libraries. J Mol Biol. 2003 Aug 29;331(5):973-9. DOI:10.1016/s0022-2836(03)00833-7

- Joern JM. DNA shuffling. Methods Mol Biol. 2003;231:85-9. DOI:10.1385/1-59259-395-X:85

- Aguinaldo AM and Arnold FH. Staggered extension process (StEP) in vitro recombination. Methods Mol Biol. 2003;231:105-10. DOI:10.1385/1-59259-395-X:105

- Coco WM, Levinson WE, Crist MJ, Hektor HJ, Darzins A, Pienkos PT, Squires CH, and Monticello DJ. DNA shuffling method for generating highly recombined genes and evolved enzymes. Nat Biotechnol. 2001 Apr;19(4):354-9. DOI:10.1038/86744

- Coco WM. RACHITT: Gene family shuffling by Random Chimeragenesis on Transient Templates. Methods Mol Biol. 2003;231:111-27. DOI:10.1385/1-59259-395-X:111

- Lutz S, Ostermeier M, and Benkovic SJ. Rapid generation of incremental truncation libraries for protein engineering using alpha-phosphothioate nucleotides. Nucleic Acids Res. 2001 Feb 15;29(4):E16. DOI:10.1093/nar/29.4.e16

- Ostermeier M and Lutz S. The creation of ITCHY hybrid protein libraries. Methods Mol Biol. 2003;231:129-41. DOI:10.1385/1-59259-395-X:129

- Lutz S and Ostermeier M. Preparation of SCRATCHY hybrid protein libraries: size- and in-frame selection of nucleic acid sequences. Methods Mol Biol. 2003;231:143-51. DOI:10.1385/1-59259-395-X:143

- Dixon DP, McEwen AG, Lapthorn AJ, and Edwards R. Forced evolution of a herbicide detoxifying glutathione transferase. J Biol Chem. 2003 Jun 27;278(26):23930-5. DOI:10.1074/jbc.M303620200

- Baik SH, Ide T, Yoshida H, Kagami O, and Harayama S. Significantly enhanced stability of glucose dehydrogenase by directed evolution. Appl Microbiol Biotechnol. 2003 May;61(4):329-35. DOI:10.1007/s00253-002-1215-1

- Miyazaki K. Random DNA fragmentation with endonuclease V: application to DNA shuffling. Nucleic Acids Res. 2002 Dec 15;30(24):e139. DOI:10.1093/nar/gnf139

- Müller KM, Stebel SC, Knall S, Zipf G, Bernauer HS, and Arndt KM. Nucleotide exchange and excision technology (NExT) DNA shuffling: a robust method for DNA fragmentation and directed evolution. Nucleic Acids Res. 2005 Aug 1;33(13):e117. DOI:10.1093/nar/gni116